Speech Recognition

Speech recognition technology is essential for creating accessible services and increasing digital inclusion, and it underpins many modern applications such as automatic subtitling, transcription systems and voice assistants.

Types of Speech and Transcription

There are several types of speech and transcription, including:

- Speech while reading – processing speech from someone reading pre-written text. This speech tends to use more formal language and standard vocabulary.

- Spontaneous speech – processing speech from normal, natural conversation. It includes pauses, repeated words and restarted sentences, and it is less formal, with occasional English words (‘code-switching’).

- Whole audio transcription – processing short or long audio recordings

- Live transcription – converting speech to text in real time, as you speak

- Verbatim transcription – recording every word, pause and phrase exactly as spoken

- Easy-to-read subtitle transcription – creating subtitles from speech in a simplified form that is easier to follow

- Meeting transcription – transcribing multi-speaker conversations, recognizing individual voices and labelling who is speaking
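Meeting transcription has to combine two kinds of output: timed transcript segments and timed speaker turns. As a minimal sketch, assuming both are already available with start and end times in seconds (the `Segment` and `Turn` types, the speaker names and the example timings are hypothetical, not taken from our tools), each transcript segment can be given the speaker whose turn overlaps it the most:

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Segment:
    """A transcribed stretch of audio (hypothetical type), times in seconds."""
    start: float
    end: float
    text: str


@dataclass
class Turn:
    """A speaker turn from a separate, assumed speaker-identification step."""
    start: float
    end: float
    speaker: str


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length of the overlap between two time intervals (0.0 if they do not overlap)."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def label_speakers(segments: list[Segment], turns: list[Turn]) -> list[tuple[str, str]]:
    """Pair each segment's text with the speaker whose turn overlaps it most."""
    labelled = []
    for seg in segments:
        best_speaker, best_overlap = "UNKNOWN", 0.0
        for turn in turns:
            o = overlap(seg.start, seg.end, turn.start, turn.end)
            if o > best_overlap:
                best_speaker, best_overlap = turn.speaker, o
        labelled.append((best_speaker, seg.text))
    return labelled


# 'Bore da' is Welsh for 'Good morning'.
segments = [Segment(0.0, 2.5, "Bore da, pawb."), Segment(2.6, 5.0, "Bore da!")]
turns = [Turn(0.0, 2.55, "Speaker 1"), Turn(2.55, 5.2, "Speaker 2")]

for speaker, text in label_speakers(segments, turns):
    print(f"{speaker}: {text}")
```

Choosing the largest overlap, rather than the speaker active at a segment's start time, makes the labelling more tolerant of small timing differences between the two systems.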

Speech Recognition Resources for Developers

Local Speech Recognition API Server

You can now run local Welsh speech recognition on your own hardware. It can power real-time voice assistants, transcribe meetings and broadcasts, translate Welsh speech into English, and automatically generate subtitles.
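Which subtitle format the server produces is not stated here. As a hedged illustration, assuming a recognizer returns timed segments as `(start, end, text)` tuples in seconds, those segments could be written out as SubRip (SRT), a widely supported subtitle format; the function names are hypothetical:

```python
from __future__ import annotations


def srt_timestamp(seconds: float) -> str:
    """Format a time in seconds as an SRT timestamp, HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: list[tuple[float, float, str]]) -> str:
    """Build SRT text: a numbered cue, a time range and the text, separated by blank lines."""
    blocks = []
    for index, (start, end, text) in enumerate(segments, start=1):
        blocks.append(
            f"{index}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text.strip()}\n"
        )
    return "\n".join(blocks)


# Hypothetical recognizer output ('Croeso i'r rhaglen' means 'Welcome to the programme').
print(to_srt([(0.0, 2.5, "Bore da, pawb."), (2.6, 65.25, "Croeso i'r rhaglen.")]))
```

Timestamps are rounded to whole milliseconds, the precision SRT uses.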

Models

Our Welsh and English speech recognition models are available for developers to use in two ways:

- Through the unit’s API center – for easy integration into your systems and services

- Locally – by downloading them from the Hugging Face website and running them on your own server or computer.
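For local use, a speech recognition model downloaded from Hugging Face can usually be run with the `pipeline` API of the `transformers` library. The sketch below assumes `transformers` and `torch` are installed and that `ffmpeg` is available for decoding audio files. The model ID is a placeholder, since specific models are not named here, and the helper names are hypothetical:

```python
def load_recognizer(model_id: str):
    """Build a local speech recognition pipeline; the weights are downloaded and cached on first use."""
    # Imported here so this module can be loaded without transformers installed.
    from transformers import pipeline

    # chunk_length_s lets the pipeline process long recordings in 30-second chunks.
    return pipeline("automatic-speech-recognition", model=model_id, chunk_length_s=30)


def transcribe_file(recognizer, audio_path: str) -> str:
    """Run a recognizer on one audio file and return the cleaned-up transcript text."""
    result = recognizer(audio_path)
    return result["text"].strip()


# Usage (downloads a model, so it is left commented out):
# asr = load_recognizer("<organisation>/<model-name>")  # placeholder model ID
# print(transcribe_file(asr, "recording.wav"))
```

Keeping the transcription step separate from model loading means the same loaded pipeline can be reused across many files.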

Data

Our data collections for speech recognition are also readily available from the Hugging Face website.

Our Development Work

As our data has grown, we have used it to train and develop our own speech recognition models, keeping pace with the exciting developments in the field. Our first step was using

When developing resources for the Welsh language, we are also aware of the need to transcribe English well. Many Welsh speakers use both languages in their daily lives, so our systems must handle both languages effectively, whether separately or in conversations that switch between them.

Practical Applications with Speech Recognition

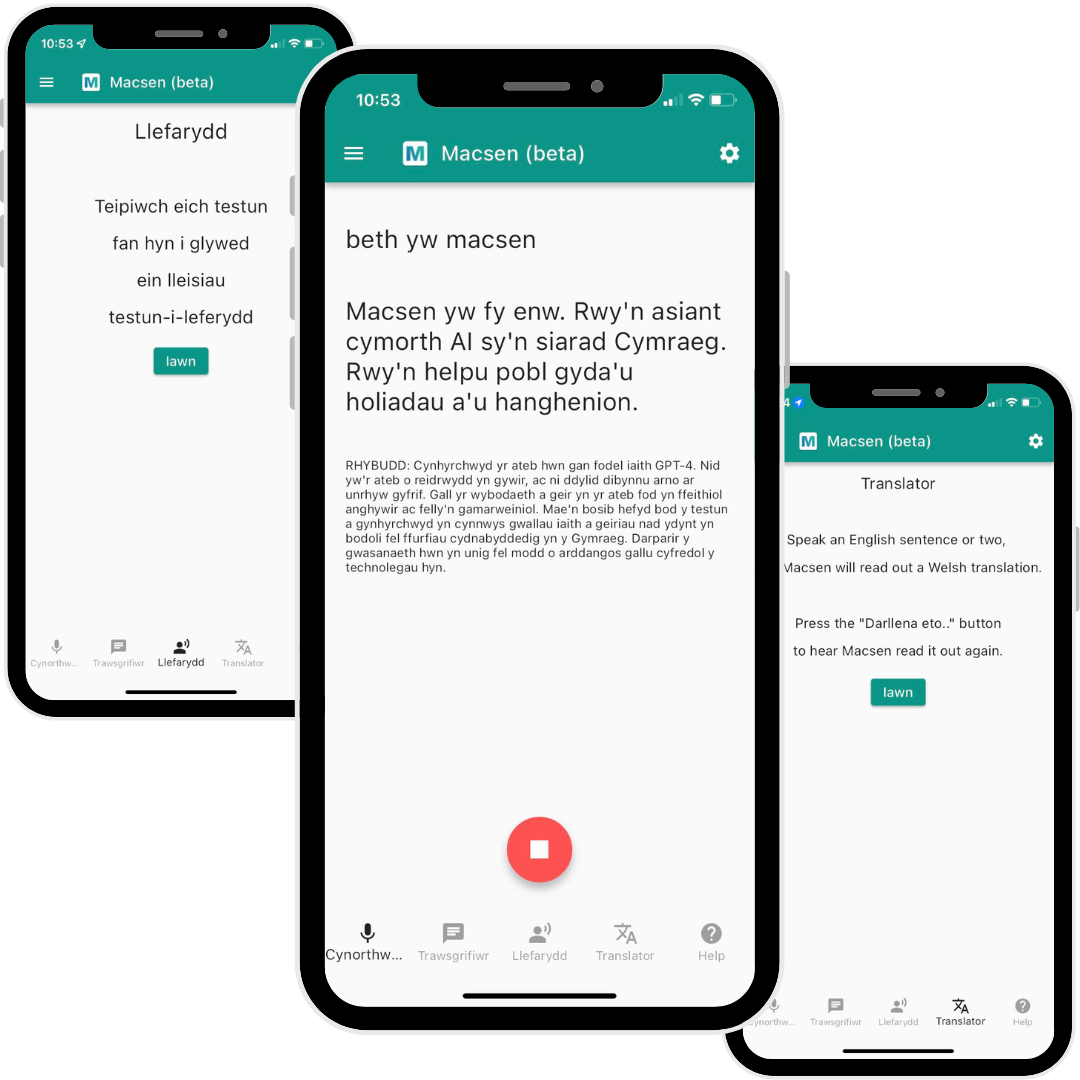

- Trawsgrifiwr – an online service that allows users to automatically transcribe their audio recordings

- Macsen – a prototype Welsh voice assistant package

Collaboration with Partners

We do not work alone. We have worked closely with a number of other organizations and developers, including:

- Mozilla Foundation through the Common Voice project

- The Wales-Brittany AI-language central Network partners

- Amazon and the Welsh Local Government Association (WLGA)

- Many podcast producers and other producers of spoken audio, who have contributed their recordings and given us permission to share them together with our transcriptions

These collaborations have been essential for gathering diverse data, sharing expertise, and ensuring that our resources meet the real needs of users and organizations.

Looking to the Future

Our work continues to evolve as we embrace the exciting possibilities of emerging technologies. We are developing speech recognition systems that can

We are also investigating large language models (LLMs) that can understand and process spoken instructions in Welsh, opening new doors for natural interfaces and Welsh voice assistants.

If you would like to know more about our work, or if you are interested in collaborating with us, we would love to hear from you. Contact us to discuss how we can work together to further develop Welsh speech technologies.